To understand brain function and cellular-level computations, you need to control and measure neural signals across extensive networks that span large brain regions. For instance, signals in the visual cortex are processed in other brain regions before they give rise to visual perception.

Optical techniques have proven highly effective for studying neural signals at the cellular level. Today, it is possible to stimulate neurons via photosensitive microbial opsins and to measure their activity via genetically introduced fluorescent probes. For looking deep into the brain, the standard method is a two-photon microscope driven by ultrashort laser pulses. Commercially available systems can image and stimulate the same field of view (FOV), but only in relatively small brain regions.

Recent advances in two-photon mesoscopes have extended the FOV and made it possible to image brain regions as large as 25 mm². Until now, however, mesoscopes could only measure neural signals, not stimulate them. If we could stimulate one area while observing the resulting signals in other areas, we could gain insight into feed-forward and feedback processing in distributed neural networks with single-cell precision. To make this possible, a team at UC Berkeley has developed a platform that combines our aeroPULSE FS50 ultrafast fiber laser for photostimulation with a two-photon mesoscope (Thorlabs Inc.) featuring a 5 mm × 5 mm FOV.

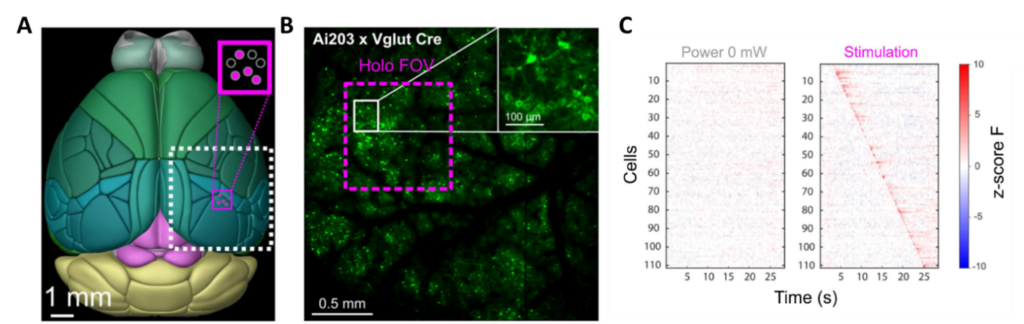

The all-optical read/write platform, developed by Dr. Lamiae Abdeladim and Professor Hillel Adesnik, stimulates a 1 mm² area while recording activity in a 25 mm² region (see Figure 1).

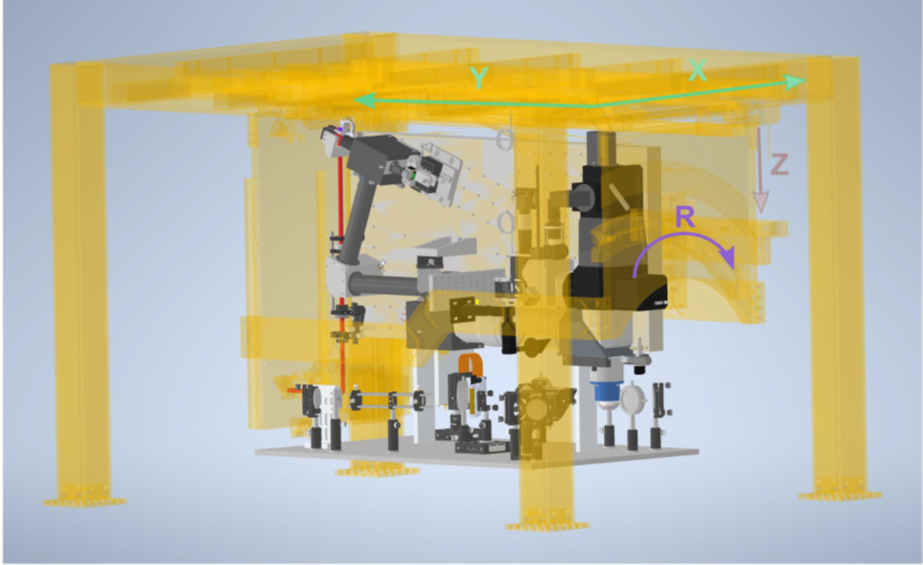

They redesigned the 3D holographic module with temporal focusing so that it fits onto an extension breadboard attached to the movable main frame of the microscope (see Figure 2). In this design, the holographic module remains invariant to the movements controlled by the motorized microscope frame, such as X and Y translation and rotation. In addition, the new photostimulation path compromises neither the wide FOV of the mesoscope nor the freedom of movement it needs for various biological imaging tasks.

Transforming neuroscience with two-photon holographic mesoscope technology

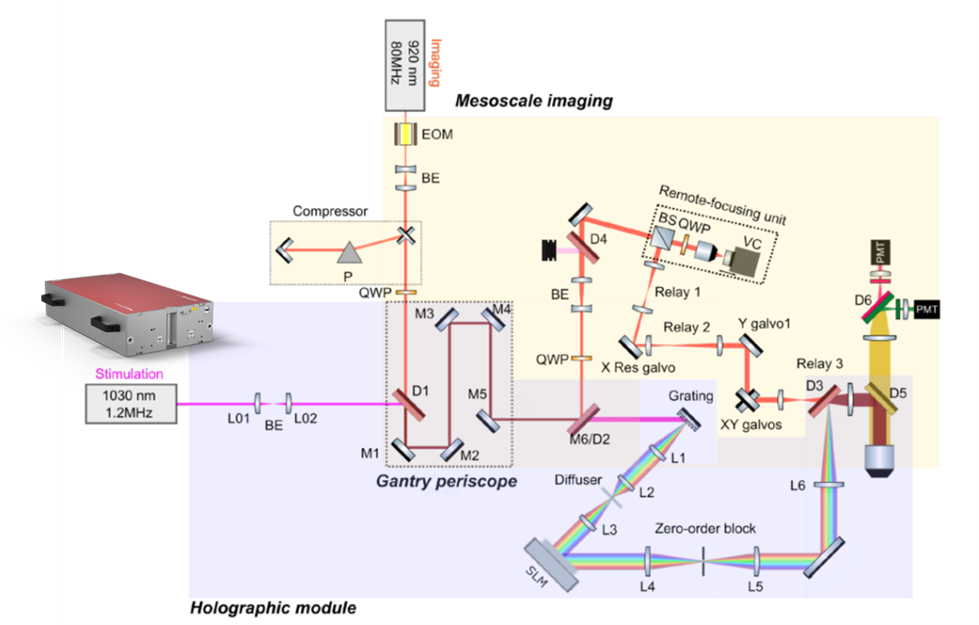

Figure 3 illustrates the 3D holographic module, which uses 3D-SHOT (three-dimensional Sparse Holographic Optogenetics with Temporal focusing). The femtosecond pulses from the aeroPULSE FS50 enter collinearly with the 920 nm imaging laser beam. The two beams are then separated by a long-pass dichroic beam splitter (M6/D2). The optics required for 3D-SHOT sit on the breadboard, which rotates together with the whole microscope. The two beams are recombined by another dichroic (D3) before entering the objective path.

The authors discuss how a two-photon holographic mesoscope has the potential to revolutionize neuroscience. It allows precise control and monitoring of neural activity, offering valuable insight into brain function, synchronization, and communication between brain areas.

It also shows promise for enhancing brain-machine interfaces by optimizing signal transmission with single-cell resolution and millisecond precision, which could improve, for example, prosthetic limb control. The wide FOV of the mesoscope extends its usefulness to larger-brained species and enables new kinds of experiments. This makes it an important technology in systems neuroscience, one that enables perturbative experiments and advances our understanding of brain function.

Conclusion

Mesoscale two-photon holographic optogenetics is poised to become a pivotal technology in systems neuroscience. It can enable experiments that test theories of brain function that were previously beyond our reach. By combining the aeroPULSE FS50 with a mesoscope, the researchers have created a tool for studying neural activity across scales and species, with great potential for advancing our understanding of the brain and its complex functioning.

More information

The results of this study are available as a preprint on bioRxiv: https://doi.org/10.1101/2023.03.02.530875.